In these cases, we set up the proper canonical tags so the search engines recognize only the page they want.īut if you just want to make sure your duplicate page doesn’t rank at all, excluding search engine robots using the robots exclusion standard may be your best option. For example, we have clients with multiple websites and for particular pages on their site, they would like the main site version indexed.

In particular the tag allows you to explicitly say that the content of the page the spider has hit is duplicate of another more “official” page. You should note that there are other, better, ways to handle duplicate content in general. In some situations, however, you may have a legitimate reason for one page on your site to have content that is duplicative of another page (either yours or not), so to prevent any duplicate content penalties, you may wish to block this duplicate content from search engines. We’ve explained before the importance of having original, rather than duplicate, content. Search Engines often take time to index updated content, so if your content changes regularly, you may feel it important to block the ephemeral content from being indexed to prevent outdated content from coming up in search. If you have content on your site that is ephemeral in nature, changing constantly, you may want to block search engines from indexing content that will soon be obsolete. Thus, if the content is sensitive, you should not only block the content from search engines using the robots exclusion standard but also use an authentication scheme to block the content from unauthorized visitors as well. It is important to note however that using the robots exclusion standard to block search engines from indexing a page does not prevent unauthorized access to the page. In general, content blocked from search engines is available only to people who have been directly given the link. If the content of a particular page is of a private nature, you may want to block this page from being indexed by search engines. As such when you set up a development copy of your site, you should use one or more of the methods below to block your entire development copy from search engine spiders. Because this is an incomplete site and its contents are likely duplicative of the contents of your live site, you don’t want your potential clients finding this incomplete version of your site. 
The most common way to handle this is to create a development or staging copy of your site and make your changes to this copy before going live with them. Websites can take a long time to create or update, and often you may want to share the progress of the site with other people while you are working on it.

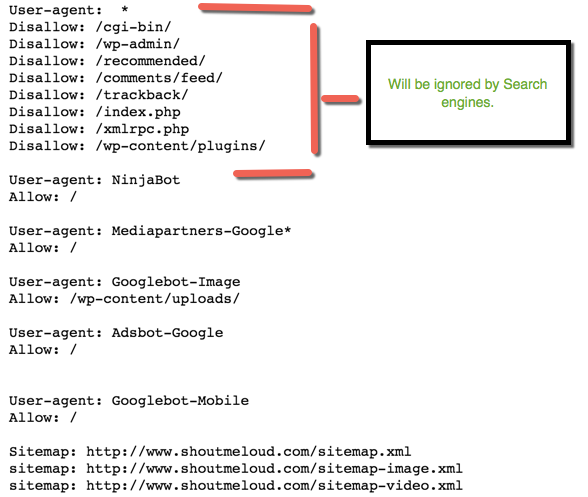

Reasons to Block Content From Search Engines Under Development There are multiple ways to implement the robots exclusion standard, and which one you use will vary depending on the type of content you want to block and your specific goal in blocking it. Fortunately, there is a mechanism in place known as the robots exclusion standard to help you block content from search engines. Learn about the robots exclusion standard, when and how to use it, and some of the nuances associated with using it and other exclusion protocols as part of your overall marketing strategy.Ī key component of Search Engine Optimization is ensuring that your content gets indexed by search engines and ranks well, but there are legitimate reasons you might want some of your content not to be listed in search engines. However, there are certain circumstances when you may not want search engines to index some of your content. In general, web-based marketing is all about getting search engines to index your content.
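To make the robots exclusion standard a little more concrete, here is a minimal sketch of one common way to apply it: a robots.txt file. It uses Python’s standard `urllib.robotparser` module to check how a compliant crawler would read two rule sets: one that blocks an entire development copy, and one that blocks only a private section and an ephemeral section of a live site. The hostnames (`staging.example.com`, `www.example.com`), the `/private/` and `/daily-deals/` paths, and the `is_crawlable` helper are all hypothetical, chosen for illustration only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a development/staging copy:
# tell every compliant crawler to stay away from every path.
STAGING_ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""

# Hypothetical robots.txt for a live site: block only a private
# section and a section of short-lived (ephemeral) pages.
LIVE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /daily-deals/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if a crawler that honors robots.txt may fetch this URL."""
    return build_parser(robots_txt).can_fetch(user_agent, url)


if __name__ == "__main__":
    checks = [
        (STAGING_ROBOTS_TXT, "https://staging.example.com/about"),
        (LIVE_ROBOTS_TXT, "https://www.example.com/about"),
        (LIVE_ROBOTS_TXT, "https://www.example.com/private/report"),
        (LIVE_ROBOTS_TXT, "https://www.example.com/daily-deals/today"),
    ]
    for robots_txt, url in checks:
        status = "allowed" if is_crawlable(robots_txt, url) else "blocked"
        print(url, "->", status)
```

Keep in mind that this check only describes what a well-behaved crawler agrees to do. Nothing in robots.txt stops a person or a non-compliant bot from requesting `/private/report` directly, which is why sensitive pages also need an authentication scheme.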
